A humanoid robot has learned to lip-sync with human speech using artificial intelligence, offering a glimpse of the more natural-looking robots to come. Developed by researchers at Columbia University, the system allows the robot to move its lips in time with spoken sounds without relying on manually programmed instructions.

The robot was trained in stages. First, it learned how its own face works by observing itself and testing different facial movements. It then studied videos of people speaking, enabling it to match sounds with realistic mouth shapes and movements. This approach allows the robot to generalise across languages and speaking styles.

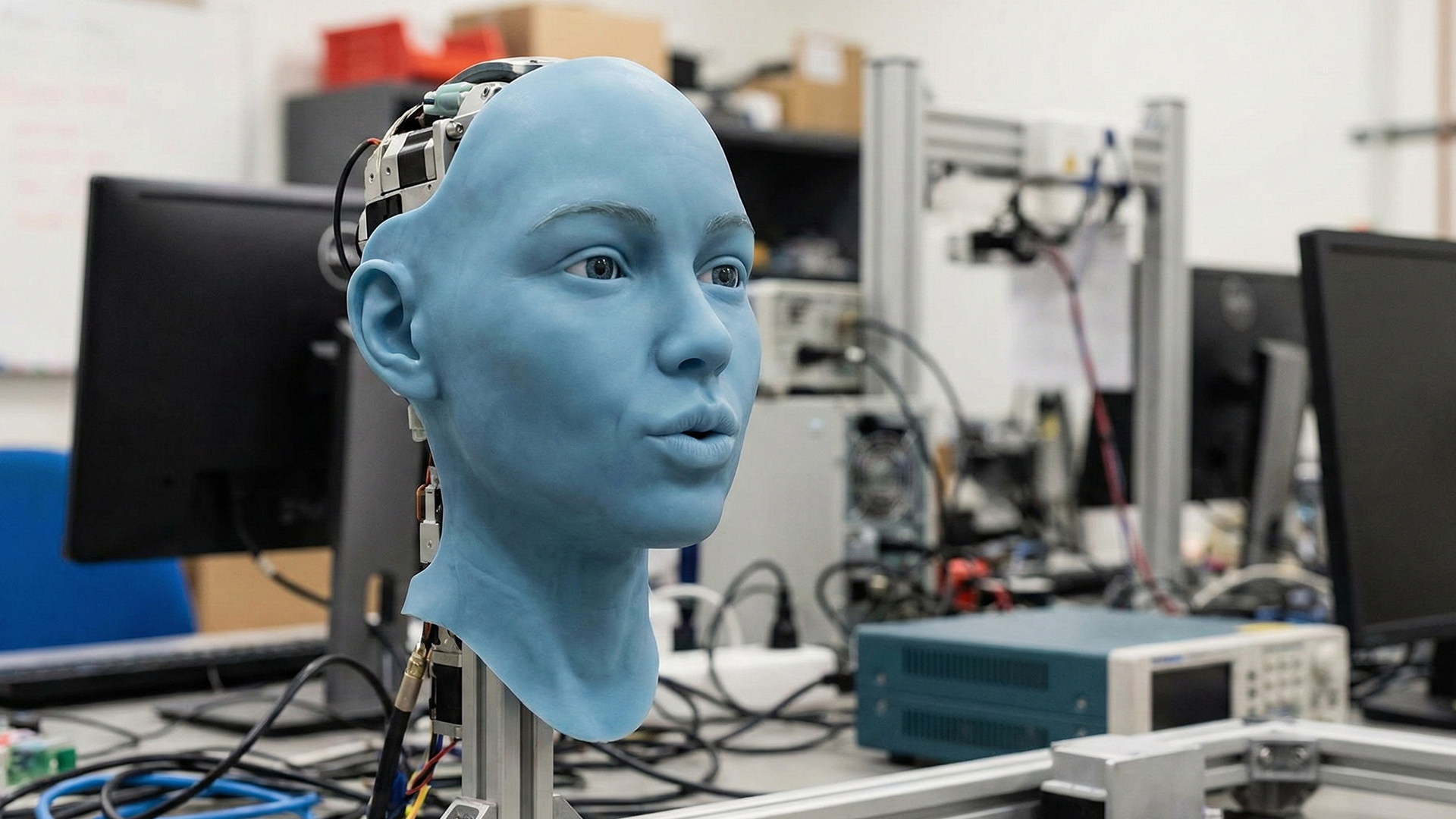

With 26 facial motors controlling its expressions, the robot uses AI to translate audio directly into coordinated lip movements. The result is smoother, more accurate lip-syncing than earlier humanoid robots achieved; those often appeared stiff or unnatural.

Researchers say this capability is important for improving human-robot interaction, as people respond better to machines that look and behave more naturally. Possible applications include teaching, assistive care, and interactive media.

Although the technology is still developing, scientists believe it marks an important step toward expressive, socially aware robots that can communicate in ways humans find familiar and comfortable.
